The End of Human-Only Assumptions

Every CI/CD pipeline was designed around one implicit assumption: a human wrote the code with conscious intent. AI agents—like GitHub Copilot Workspace, Devin, and Claude Code—break this assumption. They are already writing features, fixing CI jobs, and modifying infrastructure at speed.

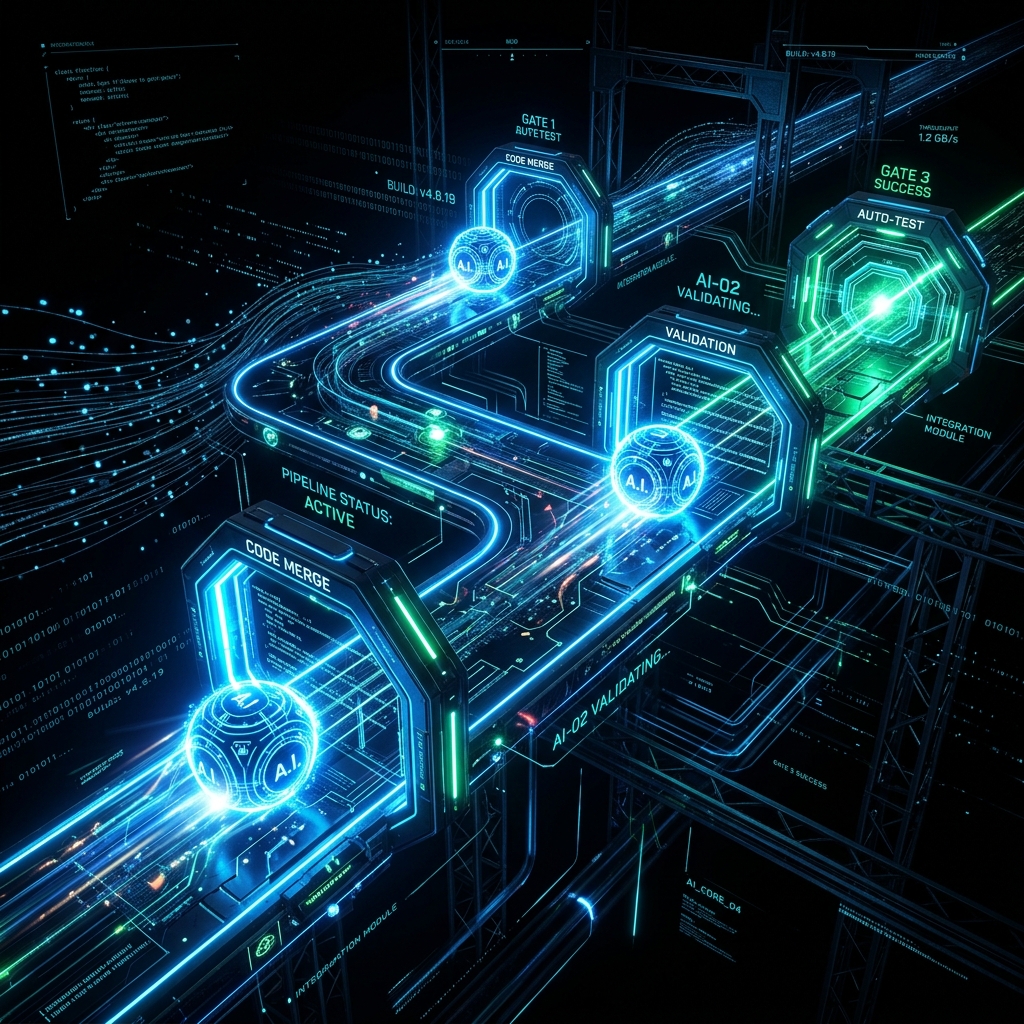

When AI agents are in the loop, the CI/CD pipeline stops being a quality gate and becomes a governance layer. Traditional review ensures that human-reviewed code is consistent; AI-aware pipelines must catch emergent issues that only appear when agentic code interacts with a broader system.

Building an AI-Aware Pipeline

An AI-aware pipeline requires four fundamental additions to the standard DevOps workflow:

1. Provenance and Attribution

Every AI-generated change must be tagged at commit time with metadata: which model produced it, what prompt triggered it, and which human authorized it. Frameworks like SLSA and tools like Sigstore/Gitsign provide the necessary cryptographic chain of custody.

2. AI-Specific Static Analysis

Standard SAST tools catch known vulnerabilities, but AI can produce novel, semantically incorrect patterns. Pipelines need Semgrep rules tuned for LLM failure modes (hallucinated APIs, incorrect error propagation) and OPA/Conftest to enforce architectural invariants.

3. Hallucinated Dependency Detection

LLMs occasionally invent plausible but non-existent package names—a major risk for dependency confusion attacks. Mitigation requires strict lockfile enforcement and treating any unrecognized dependency in an AI PR as a high-priority human review item.

4. Intent-Oriented Human Review

When agents write code, human review changes character. It is no longer about mechanical correctness (tests handle that). It is about intent alignment: Should this feature exist? Does this infrastructure change match our architectural direction? The reviewer moves from checking the implementation to checking the decision.

Risk Modeling for Agentic Development

Organizations must explicitly model three primary agentic risks:

- The Compromised Agent: An agent manipulated via prompt injection to introduce backdoors.

- The Confident Hallucination: An agent implementing a security algorithm incorrectly while providing readable but false documentation.

- The Runaway Agent: An agent with broad permissions making individually reasonable decisions that lead to a collectively catastrophic outcome (e.g., mass resource deletion).

Summary: The GitOps Connection

GitOps is the governance structure that makes AI agents safe at scale. By making Git the single source of truth, every change an agent makes—from spinning up servers to updating manifests—becomes a versioned, reviewable pull request.

The pipelines we built for humans aren’t wrong; they are incomplete. The transition to AI-aware CI/CD is the organizational decision to treat agent governance as a first-class engineering concern before something goes wrong.